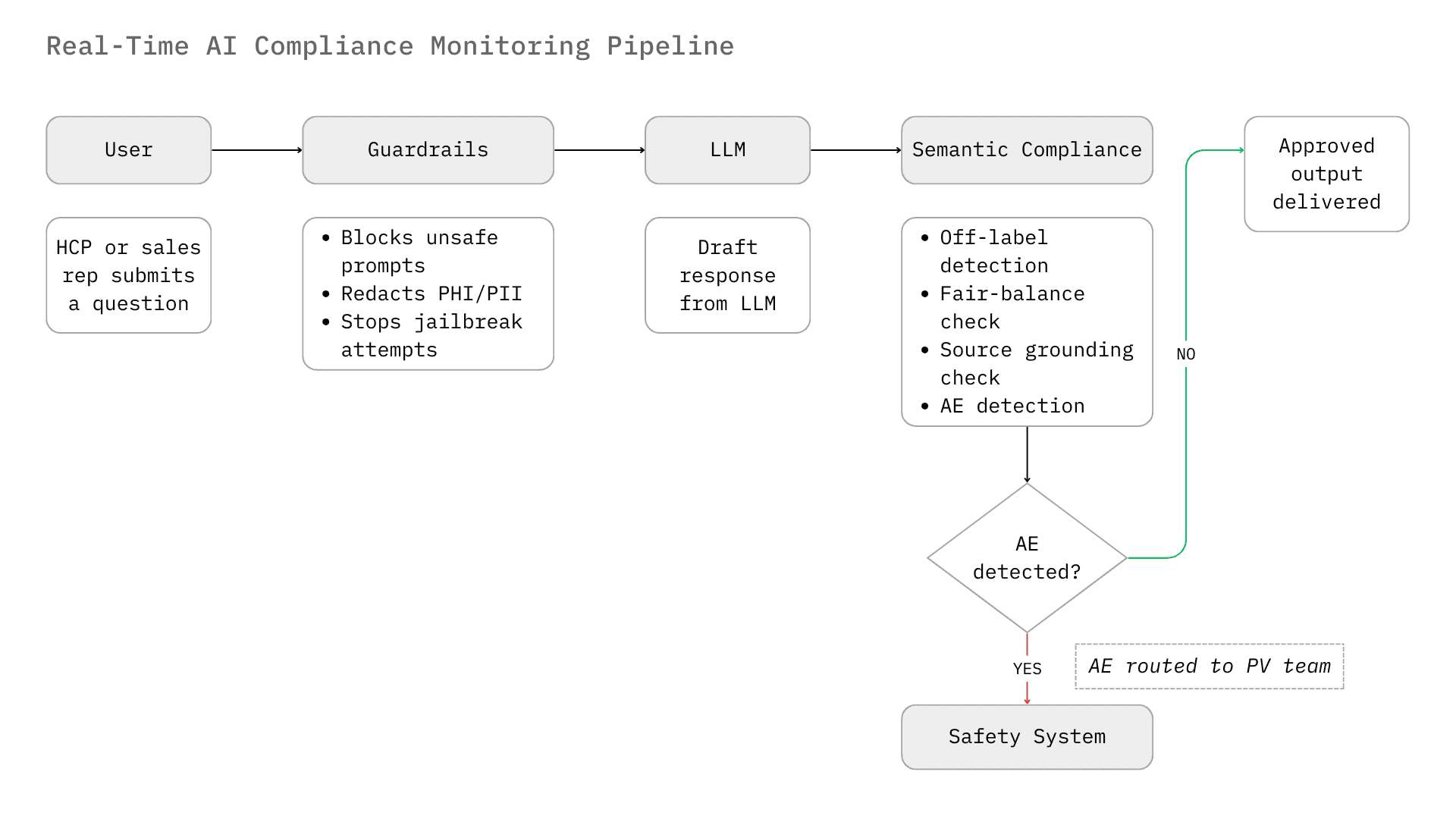

AI compliance monitoring in pharma operations has two distinct surfaces. The first is the transactional surface: every HCP interaction, every transfer of value, every grant decision and program execution event, continuously read and classified against policy. The second is newer and less governed: what happens when a generative AI system speaks on a pharma company's behalf. A sales copilot drafts a pre-call plan. An HCP chatbot answers a dosage query in real time. Neither waits for Medical, Legal, and Regulatory (MLR) review. Neither produces a deterministic output. Both are now enforcement surfaces.

This post covers that second surface: AI output compliance monitoring, the real-time surveillance of what AI systems generate within pharma commercial and medical workflows. It is the specialised sub-layer of Watch, the capability within a compliance intelligence platform that reads AI outputs the same way it reads human transactions: continuously, in context, with policy citations in the audit log. For the complete six-capability compliance platform framework (Watch, Know, Build, Guide, Run, and Report), see our guide to AI-powered compliance platforms for life sciences.

Zelthy is an AI-native compliance intelligence platform for life sciences, providing 100% transaction coverage across field operations, HCP engagement, PSP workflows, and promotional review, deployed in 4–8 weeks. Trusted by Roche, Bristol-Myers Squibb, AstraZeneca, and Novo Nordisk across 12+ countries, with 75+ pre-built compliance use cases in production.

Why AI outputs have become a compliance surface that MLR cannot cover

The pharmaceutical industry is navigating a pivotal transformation driven by two forces colliding: the rapid adoption of Generative AI across commercial and medical workflows, and an increasingly aggressive regulatory enforcement landscape targeting digital communications. As pharmaceutical companies deploy Large Language Models to power sales team co-pilots and HCP-facing chatbots, they confront a structural paradox. The stochastic nature of GenAI, its inherent ability to generate novel, probabilistic responses, conflicts directly with the deterministic rigidity of pharmaceutical compliance, where every claim must be substantiated, every risk disclosed, and every adverse event reported.

This post covers the AI output compliance monitoring landscape, from infrastructure-layer guardrails to agentic compliance systems, and frames where each layer fits within a unified operational compliance platform. The focus is two high-stakes use cases: Sales Team Chatbots (internal tools assisting representatives with pre-call planning and content retrieval) and HCP Chatbots (external interfaces on drug websites providing medical information). Both are Watch layer problems. Both require the same architectural answer: AI reading AI outputs in real time, with policy-grounded audit trails.

The drivers are existential. In September 2025, the FDA's Office of Prescription Drug Promotion issued nearly 100 enforcement letters in a single week, the agency's most aggressive enforcement action in decades, and confirmed it had deployed its own AI tools to surveil promotional content at scale. The enforcement surface is now 100% of AI outputs. The review surface, at most pharma companies, is still a sample (Hall Render, 2025).

The regulatory imperative: why rules engines are breaking down

The deployment of conversational AI in life sciences represents a fundamental shift in the enterprise risk surface. Traditional digital assets, such as static websites, detail aids, and email templates, are deterministic. They undergo rigorous Medical, Legal, and Regulatory (MLR) review before dissemination. Chatbots and conversational agents, however, are dynamic. They generate responses probabilistically in real time, effectively bypassing the pre-approval containment mechanisms that have defined pharma compliance for decades. This shift necessitates a move from pre-approval review to real-time monitoring.

The FDA OPDP and the "Hallucination" Liability

The FDA's Office of Prescription Drug Promotion (OPDP) is the primary arbiter of truthful and non-misleading promotion in the United States. The integration of AI chatbots introduces a unique liability: hallucination-driven misbranding. If an HCP chatbot on a drug website, driven by an LLM, fabricates a clinical study result or suggests efficacy in an unapproved indication (off-label), the manufacturer is strictly liable for misbranding under the Federal Food, Drug, and Cosmetic Act (FD&C Act).¹

Recent enforcement trends indicate that the FDA is modernizing its oversight capabilities to match the industry's technological adoption. The agency has explicitly committed to leveraging "AI and other tech-enabled tools" to proactively surveil promotional activities, with a specific focus on "AI-generated health content and chatbot interactions".¹ This development creates a technological arms race; regulators are using AI to detect violations, compelling pharmaceutical companies to deploy superior AI compliance monitoring to prevent them.

The FDA's focus has expanded to "closing digital loopholes," explicitly mentioning algorithm-driven targeted advertising and chatbot interactions as areas of renewed scrutiny.² This is not a theoretical risk; in September 2025, the FDA issued a flurry of enforcement letters, signaling a departure from the "overly cautious approach" of previous years and a return to the aggressive enforcement paradigms of the late 1990s.³ The agency's use of AI to scan vast troves of digital content means that the probability of detection for non-compliant chatbot outputs is approaching 100%.

For teams managing submissions and regulatory intelligence across jurisdictions, Zelthy's regulatory operations platform tracks requirement changes across health authorities and automates dossier preparation.

The "Fair Balance" and "Major Statement" Challenge

In traditional media, "fair balance" (the presentation of risk information comparable to benefit claims) is structural. A print ad has a "brief summary" page; a TV spot has a rolling "major statement." In a chatbot conversation, fair balance must be temporal and contextual.

If a sales rep's internal chatbot suggests a talking point about efficacy, it must simultaneously surface the relevant safety warnings. If an HCP chatbot answers a query about dosage, it cannot omit contraindications. Compliance monitoring systems for these interfaces must possess Contextual Awareness. They cannot simply scan for keywords; they must evaluate the gestalt of the conversation to ensure that the risk profile is presented with "equal prominence" to the benefit profile.³

The complexity of this task is compounded by the "Clear, Conspicuous, and Neutral" (CCN) standards finalized by the FDA in 2023.¹ A chatbot response that buries risk information in a dense paragraph or a hyperlink may fail the CCN standard. The monitoring AI must assess the readability and prominence of the safety information within the chat stream, ensuring that the "major statement" is not just present, but effectively communicated. The FDA's move to eliminate the "adequate provision loophole"—which previously allowed broadcast ads to reference a website for detailed risks—suggests that chatbots will be held to a standard where safety information must be integral to the interaction, not offloaded to a secondary source.²
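One way to make the same-turn requirement concrete is a per-turn check. The sketch below assumes each sentence of a bot turn has already been labelled `benefit`, `risk`, or `other` by an upstream semantic classifier (not shown). The `Sentence` type, the `fair_balance_findings` function, and the 0.5 word-count ratio are illustrative assumptions, not a regulatory threshold, and word share is only a crude proxy for prominence.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Sentence:
    text: str
    label: str  # "benefit", "risk", or "other" (assumed output of an upstream classifier)


def fair_balance_findings(turn: List[Sentence], min_risk_ratio: float = 0.5) -> List[str]:
    """Return findings for one bot turn; an empty list means no issue was detected."""
    benefit_words = sum(len(s.text.split()) for s in turn if s.label == "benefit")
    risk_words = sum(len(s.text.split()) for s in turn if s.label == "risk")

    if benefit_words == 0:
        return []  # no benefit claim, so nothing to balance in this turn
    if risk_words == 0:
        return ["benefit claim without risk information in the same turn"]
    if risk_words / benefit_words < min_risk_ratio:
        return ["risk information is much shorter than the benefit claims"]
    return []


turn = [
    Sentence("Drug X lowered blood pressure in the pivotal trial.", "benefit"),
    Sentence("See the full prescribing information.", "other"),
]
print(fair_balance_findings(turn))
```

Here the link to the prescribing information does not count as risk disclosure, so the turn is flagged, which mirrors the point that safety information cannot be offloaded to a secondary source.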

Pharmacovigilance (PV) and the Unstructured Data Deluge

Perhaps the most critical operational risk in pharma AI deployment is the detection of Adverse Events (AEs). Pharmaceutical companies are legally mandated to report AEs to health authorities (FDA, EMA, PMDA) within strict timelines (e.g., 15 days for serious, unexpected events).⁴

Chatbots interacting with HCPs or patients inevitably solicit health data. A patient might type, "I took your drug and felt dizzy." A standard LLM might respond empathetically. A compliant system must recognize "dizziness" as a potential AE, classify it, capture the reporter's details, and route the unstructured text to the safety database (e.g., Argus, ArisG) for processing.⁵

Failure to capture an AE mentioned in a chatbot conversation constitutes a significant compliance violation. The market for AI monitoring in this sector is driven by the need to automate this "intake and triage" process. Manual review of every chat log is economically unfeasible given the volume of interactions. Therefore, companies require AI monitoring solutions with high sensitivity (recall) to ensure that no potential safety signal is missed.⁷ This is distinct from standard "toxicity" monitoring; an AE description is often benign in tone but critical in regulatory weight.
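A recall-first posture usually shows up as a deliberately low decision threshold on the AE classifier. The following is a minimal sketch of choosing that threshold from a labelled validation set; `pick_threshold`, `route`, the scores, and the target recall are all hypothetical, and the classifier that produces the scores is assumed rather than shown.

```python
from typing import List, Tuple


def pick_threshold(scored: List[Tuple[float, bool]], target_recall: float = 0.99) -> float:
    """Return the highest score threshold whose recall on `scored` meets the target.

    `scored` holds (model_score, is_true_ae) pairs from a labelled validation set.
    """
    positives = sum(1 for _, is_ae in scored if is_ae)
    if positives == 0:
        raise ValueError("validation set contains no adverse events")

    # Try thresholds from strictest to loosest and stop at the first that is good enough.
    for threshold in sorted({score for score, _ in scored}, reverse=True):
        caught = sum(1 for score, is_ae in scored if is_ae and score >= threshold)
        if caught / positives >= target_recall:
            return threshold
    raise ValueError("target_recall must be at most 1.0")


def route(score: float, threshold: float) -> str:
    """Send anything at or above the threshold to safety intake for human triage."""
    return "safety_intake" if score >= threshold else "continue_chat"


# Hypothetical validation scores: (classifier score, whether a reviewer confirmed an AE).
validation = [(0.92, True), (0.81, False), (0.40, True), (0.35, False), (0.05, False)]
threshold = pick_threshold(validation, target_recall=1.0)
print(threshold, route(0.45, threshold))
```

The cost of the lower threshold is more false positives reaching the safety team, which is the trade-off a recall-driven requirement accepts.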

The "Black Box" Trust Deficit

The pharmaceutical industry harbors a profound distrust of "Black Box" AI. A recent survey revealed that 65% of pharma marketers distrust AI for creating regulatory submissions due to concerns over hallucinations and lack of traceability.⁹ This sentiment drives the technical requirements for compliance monitoring systems. They cannot merely be filters; they must be auditable systems of record.

Monitoring systems must provide a "Glass Box" view. It is not enough to block a response; the system must log why it was blocked, citing the specific business rule or regulatory statute. This creates an immutable audit trail necessary for responding to FDA inquiries or Department of Justice (DOJ) investigations.¹⁰ The demand is for "Explainable AI" (XAI) that can justify its decisions—e.g., "Blocked response because it implied efficacy in a pediatric population, which contradicts Section 4.1 of the USPI."
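As an illustration of what such a log entry might hold, the sketch below records the decision, the reason, and the policy citation, and chains entries by hash so that later edits are detectable. The field names and the `append_entry` and `verify_chain` helpers are assumptions for this example. Hash chaining makes tampering evident rather than impossible, so a production system would pair it with write-once storage.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, str]) -> str:
    canonical = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def append_entry(log: List[Dict[str, str]], *, timestamp: str, decision: str,
                 reason: str, citation: str, output_text: str) -> Dict[str, str]:
    """Append a decision record that is linked to the previous record by hash."""
    body = {
        "timestamp": timestamp,
        "decision": decision,
        "reason": reason,
        "citation": citation,
        "output_sha256": hashlib.sha256(output_text.encode("utf-8")).hexdigest(),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    entry = dict(body, entry_hash=_digest(body))
    log.append(entry)
    return entry


def verify_chain(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True


log: List[Dict[str, str]] = []
append_entry(log, timestamp="2025-01-01T09:00:00Z", decision="blocked",
             reason="implied efficacy in a pediatric population",
             citation="USPI Section 4.1", output_text="<blocked model output>")
append_entry(log, timestamp="2025-01-01T09:00:05Z", decision="allowed",
             reason="claim grounded in approved labeling",
             citation="hypothetical approved-messaging policy", output_text="<model output>")
print(verify_chain(log))
```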

The compliance challenges described here apply across every AI-powered pharma workflow, including patient support. Our analysis of how AI is transforming PSPs in 2026 covers the operational side of this shift.

Continuous Compliance Monitoring: The Three-Layer AI Architecture

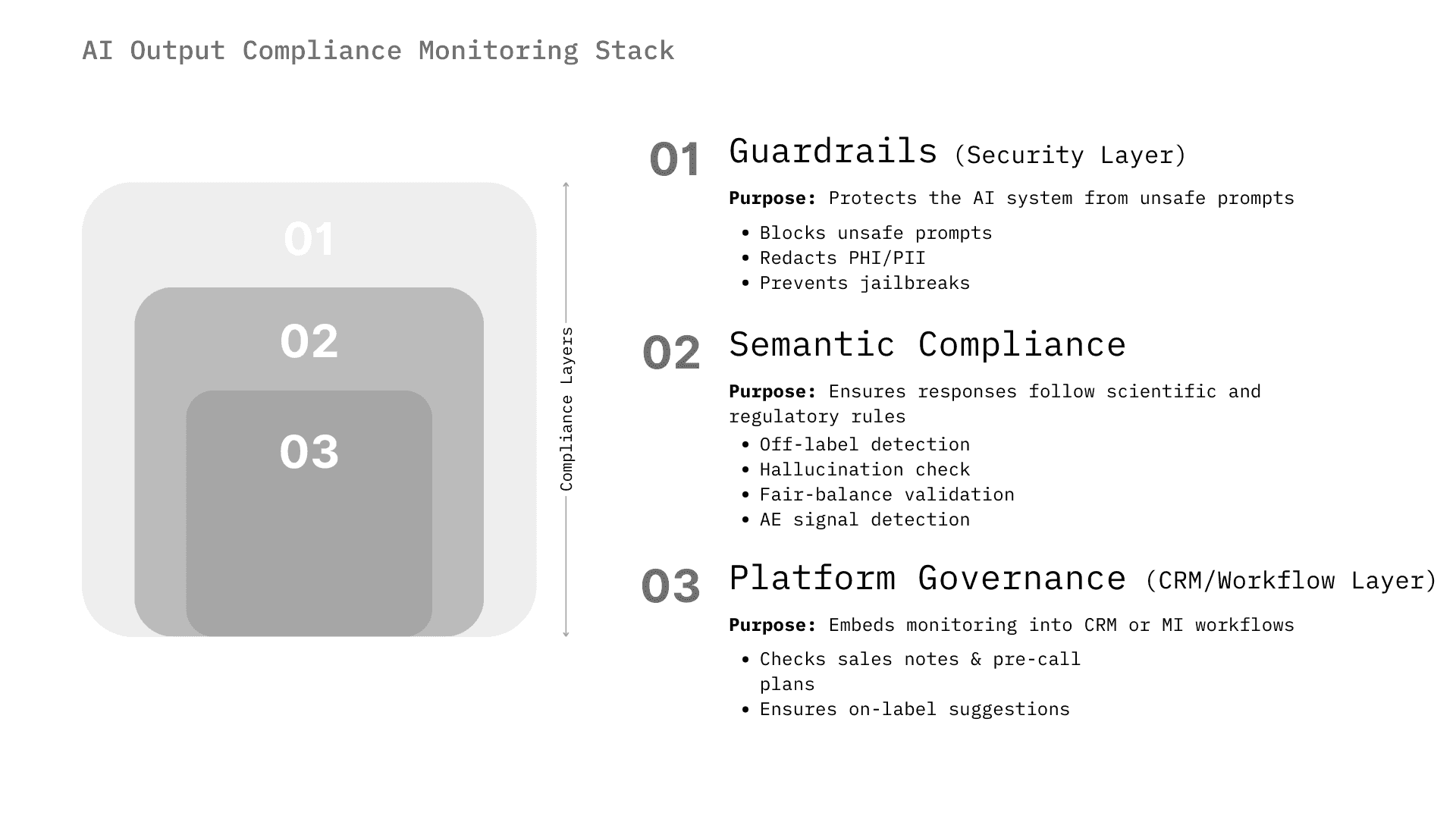

The market for AI compliance monitoring is not monolithic. It spans from infrastructure-level security (preventing prompt injection) to high-level semantic analysis (detecting off-label intent). We categorize the solutions into three distinct architectural layers that companies are assembling to create a "defense-in-depth" strategy.

Layer 1: Real-Time Interaction Guardrails (The Firewall)

This layer operates at the point of inference. It intercepts the user's prompt before it reaches the LLM and intercepts the LLM's response before it reaches the user. It is the first line of defense, focused on security and basic safety.

- Function: Blocks PII (Personally Identifiable Information), prevents prompt injection attacks (jailbreaking), and filters toxic content. In pharma, this layer is critical for HIPAA/GDPR compliance (redacting patient names) and preventing the model from acting outside its operational design domain (ODD).

- Key Players: Lakera, Guardrails AI, NVIDIA NeMo Guardrails, Amazon Bedrock Guardrails.

- Pharma Relevance: While these are horizontal tools, they are essential for the foundational security of pharma AI. For instance, Lakera Guard is used to prevent data leakage and prompt injections, ensuring that internal sales chatbots do not reveal sensitive competitive intelligence or patient data to unauthorized users.¹¹ The ability to detect "jailbreak" attempts (e.g., a user trying to trick the bot into prescribing medication) is a critical security feature for external-facing HCP bots.¹³

- Latency Constraint: Because this layer sits in the live chat path, it must operate with sub-50ms latency to avoid degrading the user experience. Solutions like Lakera emphasize their low-latency architecture as a key differentiator for real-time deployment.¹⁴
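The intercept-before and intercept-after pattern can be sketched in a few lines. This example is not based on any vendor's API: `redact`, `guarded_call`, the two regular expressions, and the 50 ms default budget are assumptions for illustration. It only masks identifiers that follow a fixed pattern, and redacting patient names would require a named-entity model.

```python
import re
import time
from typing import Callable, Dict

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact(text: str) -> str:
    """Mask pattern-matchable identifiers (emails and US-style phone numbers)."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


def guarded_call(prompt: str, llm: Callable[[str], str],
                 budget_ms: float = 50.0) -> Dict[str, object]:
    """Redact the prompt before the model sees it and the response before the user does."""
    t0 = time.perf_counter()
    safe_prompt = redact(prompt)
    pre_ms = (time.perf_counter() - t0) * 1000

    raw_response = llm(safe_prompt)  # model latency is deliberately not counted

    t1 = time.perf_counter()
    safe_response = redact(raw_response)
    post_ms = (time.perf_counter() - t1) * 1000

    guard_ms = pre_ms + post_ms
    return {
        "prompt": safe_prompt,
        "response": safe_response,
        "guard_ms": guard_ms,
        "within_budget": guard_ms <= budget_ms,
    }


def echo_llm(prompt: str) -> str:
    """Stand-in for a real model call."""
    return "You wrote: " + prompt + " Call 555-987-6543."


result = guarded_call("Contact jane.doe@example.com or 555-123-4567.", echo_llm)
print(result["prompt"])
print(result["response"])
```

Timing only the guardrail work, not the model call, keeps the latency budget attributable to the layer that owns it.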

Layer 2: Semantic & Regulatory Agents (The Auditor)

This is the highest value segment for pharma. These systems run in parallel or post-hoc to analyze the content of the interaction against specific regulatory frameworks (e.g., 21 CFR Part 202, PhRMA Code).

- Function: Detects off-label promotion, checks for fair balance, verifies citations against the Approved Product Labeling (USPI/SmPC), and identifies potential AEs. This layer understands medical context. It knows that "progression-free survival" is a valid endpoint but "cure" is likely a violation.

- Key Players: Sorcero, Norm.ai, WhizAI, Aktana, ZoomRx (Ferma).

- Pharma Specificity: High. These tools are trained on biomedical ontologies (MedDRA, SNOMED) and regulatory corpora. Sorcero, for example, utilizes "medically-tuned AI" to detect "Prohibited Intent" and ensure responses are grounded in scientific literature, specifically targeting Medical Affairs use cases.¹⁵ Norm.ai takes a "Compliance as Code" approach, converting regulations into "AI Agents" that can autonomously audit content against laws like the FD&C Act.¹⁰

- Mechanism: These systems often use Retrieval Augmented Generation (RAG) validation. They check if the AI's output is supported by the retrieved context chunks (the "Grounding" check). If the AI makes a claim that is not present in the referenced medical document, the Semantic Agent flags it as a hallucination.¹⁸
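The grounding check in the Mechanism bullet can be sketched as a per-sentence support test. The version below uses simple token overlap as a stand-in for the entailment or claim-verification model a real semantic agent would use. `ungrounded_sentences`, the stopword list, and the 0.6 support threshold are assumptions for this example.

```python
import re
from typing import List, Set

STOPWORDS = {"a", "an", "the", "in", "of", "by", "and", "or", "to", "is", "for", "with"}


def tokens(text: str) -> Set[str]:
    """Lowercase content words; a crude substitute for semantic matching."""
    return {t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS}


def ungrounded_sentences(response: str, chunks: List[str],
                         min_support: float = 0.6) -> List[str]:
    """Return response sentences that no single retrieved chunk supports well enough."""
    chunk_tokens = [tokens(c) for c in chunks]
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", response.strip()):
        sentence_tokens = tokens(sentence)
        if not sentence_tokens:
            continue
        support = max(
            (len(sentence_tokens & ct) / len(sentence_tokens) for ct in chunk_tokens),
            default=0.0,
        )
        if support < min_support:
            flagged.append(sentence)
    return flagged


chunks = ["Drug X reduced systolic blood pressure by 10 mmHg in adults."]
response = ("Drug X reduced systolic blood pressure in adults. "
            "Drug X cures hypertension in children.")
print(ungrounded_sentences(response, chunks))
```

Token overlap cannot catch a claim that reuses the source's words while reversing their meaning, which is why this layer typically relies on medically tuned models rather than lexical matching.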

Layer 3: Platform-Native Governance (The Ecosystem)

Major life sciences platforms are embedding compliance monitoring directly into their CRMs and content management systems, viewing compliance as a feature rather than a separate product.

- Function: Integrated monitoring within the workflow of sales reps and medical science liaisons (MSLs). This involves checking emails, call notes, and chat interactions within the proprietary "walled garden" of the CRM.

- Key Players: Veeva Systems (Vault CRM), Salesforce (Life Sciences Cloud), IQVIA (Orchestrated Customer Engagement).

- Market Impact: This exerts consolidation pressure. If Veeva provides a "Free Text Agent" that automatically scans sales notes for off-label claims, the need for a third-party monitoring tool diminishes for Veeva customers.¹⁹ Similarly, Salesforce's Einstein Trust Layer provides a native "zero retention" architecture that handles toxicity and hallucination detection for its Life Sciences Cloud users.²⁰

The AI output compliance monitoring ecosystem operates across three architectural layers:

- Layer 1 (Real-Time Guardrails) provides infrastructure-level security, blocking PII leakage, preventing prompt injection, and filtering toxic content through solutions like Lakera Guard and NVIDIA NeMo Guardrails, operating at sub-50ms latency in the live chat path.

- Layer 2 (Semantic and Regulatory Agents) provides the highest-value pharma-specific analysis, detecting off-label promotion, verifying citations against approved labeling (USPI/SmPC), checking fair balance, and identifying adverse events through vendors like Sorcero, Norm.ai, and WhizAI using biomedical ontologies (MedDRA, SNOMED).

- Layer 3 (Platform-Native Governance) embeds compliance directly into CRM and content management workflows through incumbents like Veeva Systems and Salesforce, aiming to commoditize monitoring as a native feature.

Where the assembled stack falls short

The limitation of assembling these three layers from separate vendors is auditability. Each layer logs in its own system. When an enforcement inquiry arrives, reconstructing the full chain (what was asked, what the LLM generated, what was blocked and why, what was routed to the PV team) across three vendor systems is a significant operational exercise.

A unified Watch layer integrates AI output monitoring within a single governed data architecture, the same system that monitors HCP transactions, field operations, and PSP workflows. Every flagged AI output cites the specific SOP version in the same audit log that holds every other compliance record. The Glass Box standard becomes operationally achievable when monitoring is consolidated, not federated.

Zelthy's compliance platform implements this: Watch agents cover both transactional operational data and AI-generated content, under a single audit architecture and policy corpus.

AI Compliance Monitoring Across Pharma Operations: What a Unified Platform Covers

The three-layer AI architecture described above governs a specific problem: what happens when an LLM generates a response. But AI compliance monitoring in pharma operations extends well beyond chatbot outputs. For most compliance officers, the higher-volume, higher-risk surface is the one that predates generative AI entirely — field force conduct, HCP engagement patterns, PSP transaction flows, and the regulatory changes that should be triggering SOP updates but often aren't, because no system is watching.

This is the operational compliance gap: most pharma companies review 2–5% of transactions. Regulators enforce against 100%.

Closing it requires a different kind of AI monitoring — one that reads every transaction in context, not just the outputs a chatbot produces.

Field force and HCP conduct monitoring

Field force compliance failures rarely come from individual bad actors. They come from patterns: a speaker program whose attendee list has drifted from the original approval, an HCP receiving hospitality across multiple events whose aggregate value crosses a Sunshine Act threshold, a rep whose call notes contain language that signals off-label discussion. Manual sampling catches none of this.

AI compliance monitoring for field operations reads every CRM interaction, every expense entry, every speaker program record continuously, not quarterly. It surfaces anomalies that don't trip any individual rule but are statistically significant: clustering patterns in HCP spend, repeat attendees across promotional events, call note language that deviates from approved messaging. The monitoring layer flags what matters; human reviewers decide what to do about it.

The output is not a larger list of exceptions for compliance teams to work through. It is a smaller, higher-confidence set of genuine risks — because the AI has already dismissed the false positives that sampling-based review either catches incorrectly or misses entirely.
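The aggregate-value pattern is a good example of a risk that no per-transaction rule can see. A minimal sketch, assuming transfers of value arrive as `(hcp_id, calendar_year, amount_in_cents)` tuples and using a hypothetical limit rather than any actual reporting threshold:

```python
from collections import defaultdict
from typing import DefaultDict, Iterable, List, Tuple

Transfer = Tuple[str, int, int]  # (hcp_id, calendar_year, amount_in_cents)


def aggregate_only_breaches(transfers: Iterable[Transfer],
                            limit_cents: int) -> List[Tuple[str, int]]:
    """Return (hcp_id, year) pairs whose total exceeds the limit although no single
    transfer does, i.e. the cases a per-transaction rule would miss."""
    totals: DefaultDict[Tuple[str, int], int] = defaultdict(int)
    largest: DefaultDict[Tuple[str, int], int] = defaultdict(int)
    for hcp_id, year, cents in transfers:
        key = (hcp_id, year)
        totals[key] += cents
        largest[key] = max(largest[key], cents)
    return sorted(
        key for key, total in totals.items()
        if total > limit_cents and largest[key] <= limit_cents
    )


transfers = [
    ("hcp-1", 2025, 6000), ("hcp-1", 2025, 5500),  # two modest events, large total
    ("hcp-2", 2025, 4000),                          # well under the limit
    ("hcp-3", 2025, 15000),                         # a single-transaction rule already catches this
]
print(aggregate_only_breaches(transfers, limit_cents=10000))
```

Amounts are held as integer cents so that running totals are exact.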

PSP and patient program compliance

Patient support programs create a distinct compliance surface. A PSP that processes thousands of patient enrollments, benefit verifications, and product dispatches per month is generating continuous transaction data that most compliance functions never review. The risks are real: adverse event signals buried in coordinator call logs, consent lineage gaps that become DPDP or HIPAA exposure, PAP eligibility decisions that don't match documented criteria.

AI monitoring applied to PSP operations does three things that manual review cannot. First, it reads 100% of patient interaction records for adverse event language, not as a downstream PV activity, but as a real-time operational check. Second, it validates consent and eligibility decisions against documented SOPs at the point of processing, not at audit time. Third, it maintains an audit-ready log of every coordinator action and system decision, so inspection preparation is continuous rather than a six-week manual exercise.

A leading global pharma company managing oncology patient assistance across multiple markets reduced onboarding time by 60% and improved therapy adherence by 45% after moving to a platform with AI-native compliance monitoring built into the PSP workflow, not added as a separate governance layer on top of it.

Regulatory change to SOP: Closing the six-week gap

The compliance monitoring problem is not only transactional. It is also temporal. When the OIG issues an advisory, when EFPIA tightens hospitality limits, when FDA clarifies expectations for AI-generated promotional content, a compliance function running on rules engines and manual processes faces a predictable sequence: analyst reads the guidance, maps it mentally to affected programs, opens a change request, routes it through policy review. Six to twelve weeks from publication to updated SOP is typical. In that window, programs are running against outdated policy.

AI regulatory intelligence compresses this from weeks to hours. The model reads the regulatory update, identifies every impacted SOP, policy document, and active program in the company's corpus, and assigns action items to the right owners automatically. The compliance team does not need to discover the gap; they receive a structured impact summary and a draft revision for review.

This is not a monitoring capability in the narrow sense. But it is where AI compliance monitoring for pharma operations has its highest leverage, because the violation that happens in week four of a six-week SOP update cycle is a violation that no guardrail infrastructure would have caught.

Deep Dive: Sales Team Chatbots and the "Internal Co-Pilot"

Pharmaceutical sales representatives are increasingly supported by AI co-pilots, which are chatbots integrated into CRM systems that help reps plan calls, summarize interactions, and retrieve approved messaging. The primary objective here is Commercial Effectiveness, but the primary constraint is Compliance.

The "Free Text" Revolution and Compliance Monitoring

Historically, pharma companies have restricted sales reps from entering free-text notes in CRMs to avoid the risk of recording off-label discussions or unverified claims, which could be discoverable during litigation. Reps were forced to use "drop-down" menus, limiting the richness of data captured.

The AI Solution: AI compliance monitoring is unlocking the "free text" capability.

- Veeva's "Free Text Agent": Launching in late 2025, this agent analyzes text entry in real-time. It flags non-compliant phrases (e.g., "Doctor Jones loves using Drug X for [Off-Label Condition]") and prompts the rep to revise the note before saving. This allows companies to capture rich customer insights without creating a liability trail.¹⁹

- Exeevo Omnipresence: This CRM platform uses a "Conversational AI Assistant" and an "Advanced Reasoning Engine" to process user queries and automate tasks while ensuring compliance with organizational and industry regulations.²¹

Market Insight: The market for monitoring sales chatbots is essentially a market for enabling sales intelligence. Compliance monitoring is the "license to operate" for generative AI in the field. Without the ability to monitor and sanitize inputs/outputs in real-time, legal teams will not approve the deployment of GenAI sales assistants.

The "Pre-Call" Hallucination Risk

Sales chatbots often generate "Pre-Call Plans" suggesting what a rep should discuss with a doctor. This "Next Best Action" (NBA) capability is a core driver of sales efficiency.

- The Risk: If the AI suggests, "Dr. Smith sees many patients with Condition Y, so pitch Drug Z," but Drug Z is not approved for Condition Y, the AI has just instructed the rep to commit a federal crime (off-label promotion).

- Monitoring Mechanism: Compliance layers must verify that internal AI suggestions align with the external approved label. Tools like Aktana use "Contextual Intelligence" to ensure NBA recommendations are compliant and within strategy. The monitoring system acts as a "super-ego" to the "id" of the sales AI, suppressing high-risk suggestions.²²

- WhizAI: Provides "GenAI-Powered Conversational Analytics" that allows sales teams to query data using natural language. Its domain-tuned LLM ensures that the insights delivered are precise and compliant, avoiding the "hallucination" of market share data or performance metrics.²³
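The label-alignment check in the Monitoring Mechanism bullet reduces, at its simplest, to comparing each suggestion against the approved indications. The sketch below assumes the sales AI emits structured suggestions with `drug` and `condition` fields and that approved indications are available as a lookup; both are assumptions for illustration, as is the `screen_suggestions` helper. A real system would derive the lookup from the current label and match conditions semantically rather than by exact string.

```python
from typing import Dict, List, Set, Tuple

Suggestion = Dict[str, str]


def screen_suggestions(
    suggestions: List[Suggestion],
    approved: Dict[str, Set[str]],
) -> Tuple[List[Suggestion], List[Tuple[Suggestion, str]]]:
    """Split suggestions into those that match the label and those to suppress."""
    allowed: List[Suggestion] = []
    suppressed: List[Tuple[Suggestion, str]] = []
    for suggestion in suggestions:
        indications = approved.get(suggestion["drug"], set())
        if suggestion["condition"].lower() in indications:
            allowed.append(suggestion)
        else:
            reason = (f"{suggestion['condition']} is not an approved indication "
                      f"for {suggestion['drug']}")
            suppressed.append((suggestion, reason))
    return allowed, suppressed


# Hypothetical label data and model suggestions.
approved = {"Drug Z": {"condition x"}}
suggestions = [
    {"hcp": "Dr. Smith", "drug": "Drug Z", "condition": "Condition Y"},
    {"hcp": "Dr. Jones", "drug": "Drug Z", "condition": "Condition X"},
]
allowed, suppressed = screen_suggestions(suggestions, approved)
print([s["hcp"] for s in allowed])
print([reason for _, reason in suppressed])
```

Returning the reason alongside each suppressed suggestion gives the audit log something to cite.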

Vendor Landscape for Sales Compliance

Deep Dive: HCP Chatbots and Medical Information (MI)

HCP chatbots on drug websites (e.g., "Ask Pfizer" or brand-specific sites) serve as automated Medical Science Liaisons. They answer complex clinical queries, a function traditionally handled by call centers. The stakes here are even higher than in sales, as the information is directly clinical.

The "Medical Information" Barrier and Unsolicited Requests

Unlike sales bots, HCP bots often need to discuss off-label information if it is in response to an unsolicited request (a "safe harbor" in many jurisdictions). However, distinguishing between a compliant "unsolicited request" and a bot-prompted "solicitation" is nuanced.

- Monitoring Requirement: The AI must rigorously classify the intent of the HCP's query. If the query is off-label, the bot must deliver a standard, scientifically balanced response (Scientific Response Document - SRD) without "promoting" the use.

- Solution: Sorcero provides "Medical Affairs Intelligence" that uses "Prohibited Intent Detection." This safeguard automatically detects if a query (or the potential response) violates compliance policies, ensuring that the AI guides users to ask questions in a compliant manner or hands off to a human when the risk threshold is breached.¹⁵

- Eversana: Offers a "Medical Chatbot" integrated with its "Cognitive Core" platform. It allows for self-service access to SRDs and Prescribing Information (USPI), with built-in logic to connect users to live agents if a response is not approved for automated delivery.²⁶

Adverse Event Intake: The Critical Safety Valve

For HCP chatbots, the ability to recognize an Adverse Event (AE) is non-negotiable.

- The Mechanism: AE monitoring tools use Named Entity Recognition (NER) and specialized NLP models trained on safety databases (like FAERS) to identify symptom-drug pairs.

- Conversational PV: Research indicates that domain-specific BERT models (like "Waldo") can outperform general-purpose LLMs (like GPT-4) in detecting AEs from unstructured text. In the "Waldo" study, a fine-tuned RoBERTa model achieved 99.7% accuracy in detecting AEs, compared to 94.4% for a standard chatbot. The chatbot produced 18x more false positives.²⁷

- Implication: This data validates the market need for specialized PV monitoring layers. Relying on the base LLM's safety filters is insufficient for pharmacovigilance. Companies must layer a dedicated PV model (like Sorcero Safety or Eversana MedInsights) over the chat interface to ensure high-fidelity AE capture.

Citation and Grounding (The "Zero Hallucination" Standard)

A compliant HCP chatbot must cite its sources. "Trust but verify" is the operating model.

- Monitoring Solution: RAG (Retrieval Augmented Generation) architectures are standard, but retrieval alone does not guarantee a faithful answer. The monitoring layer verifies the link between each claim and the source it cites.

- ZoomRx Ferma: This platform focuses on "zero hallucination" by refusing to answer if the data isn't in its connected modules. It provides hyperlinked citations for every response, allowing the user (and the compliance monitor) to verify the source text immediately.¹⁸ This "refusal to answer" is a critical compliance feature: in pharma, a silent bot is better than a lying bot.

- Pfizer's "Health Answers": This consumer/HCP facing tool uses generative AI to summarize information from trusted, independent sources. It emphasizes transparency by labeling answers as "unverified" if they don't rely on independent sources, demonstrating a robust compliance guardrail implementation.²⁸
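A refuse-or-cite policy can be expressed as a thin wrapper around the generator. This is a generic sketch and not a description of any vendor's implementation: `answer_or_refuse`, the passage fields (`text`, `source`, `score`), the refusal wording, and the 0.5 relevance cut-off are all assumptions.

```python
from typing import Callable, Dict, List

REFUSAL = "I can't answer that from the approved sources. Please contact Medical Information."

Passage = Dict[str, object]  # expects "text", "source", and "score" keys


def answer_or_refuse(question: str, passages: List[Passage],
                     generate: Callable[[str, List[str]], str],
                     min_score: float = 0.5) -> Dict[str, object]:
    """Answer only when retrieval found supporting passages, and always attach citations."""
    supporting = [p for p in passages if float(p["score"]) >= min_score]
    if not supporting:
        # Nothing relevant was retrieved, so the generator is never called.
        return {"answer": REFUSAL, "citations": [], "refused": True}
    answer = generate(question, [str(p["text"]) for p in supporting])
    return {
        "answer": answer,
        "citations": [str(p["source"]) for p in supporting],
        "refused": False,
    }


def stub_generate(question: str, context: List[str]) -> str:
    """Stand-in for a model call that is restricted to the supplied context."""
    return "Based on the approved sources: " + " ".join(context)


passages = [
    {"text": "Recommended adult dose is stated in Section 2.", "source": "USPI Section 2", "score": 0.9},
    {"text": "Unrelated storage guidance.", "source": "USPI Section 16", "score": 0.1},
]
print(answer_or_refuse("What is the adult dose?", passages, stub_generate))
print(answer_or_refuse("What is the adult dose?", [], stub_generate))
```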

AI in Pharmacovigilance: Automating Adverse Event Intake and Signal Detection

While commercial compliance focuses on what is said, pharmacovigilance (PV) focuses on what is heard. The market for AI monitoring in PV is driven by the sheer volume of unstructured data generated by chatbots and social listening.

The "Intake" Bottleneck

Traditional PV relies on human data entry professionals to read emails, listen to call recordings, and manually code AEs into databases like Argus. This process is slow, expensive, and error-prone.

- AI Automation: AI tools can automate the "intake" process. AiRo Labs deployed a voice-enabled chatbot for a major life sciences company that processed 200,000 AE cases annually, improving real-time reporting by 73% and reducing compliance risk by 25% by eliminating human error.⁸

- Visium: Helped a pharma client develop an ML pipeline to monitor social media for AEs, addressing the issue of "model drift" where the accuracy of detection dropped in production. Their solution provided a transparent, compliant workflow for patient safety experts.²⁹

"Waldo" and the Superiority of Specialized Models

The "Waldo" study²⁷ provides empirical evidence that specialized, smaller models (SLMs) are superior to large generative models for compliance monitoring tasks like AE detection.

- Key Finding: The specialized model had an F1-score of 95.1% for positive AE detection, whereas the generic chatbot only achieved 38%.

- Market Implication: This suggests that the "Compliance Monitoring" market will favor vendors who build purpose-built discriminative models (classifiers) rather than those who simply prompt-engineer a GPT-4 wrapper. The "Agent" that watches the chatbot will likely be a different, more specialized AI than the chatbot itself.
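Accuracy and F1 can diverge sharply when adverse events are rare, because accuracy is dominated by the many true negatives while F1 looks only at the positive class. The sketch below computes both from a confusion matrix. The counts are hypothetical and chosen only to show the effect; they are not data from the study.

```python
from typing import Dict


def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Compute accuracy, precision, recall, and F1 for the positive (AE) class."""
    total = tp + fp + fn + tn
    if total == 0:
        raise ValueError("confusion matrix is empty")
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Hypothetical: 1,000 messages, 25 of which truly describe an adverse event.
metrics = classification_metrics(tp=20, fp=50, fn=5, tn=925)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these counts the classifier is 94.5% accurate yet has an F1 of roughly 42%, because 50 of its 70 positive calls are false alarms. This is why AE monitoring is usually evaluated on precision, recall, and F1 rather than accuracy alone.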

Competitive Landscape: Vendors and Platforms

The market is stratified by the depth of pharmaceutical specialization. We identify four tiers of vendors competing for the compliance monitoring budget.

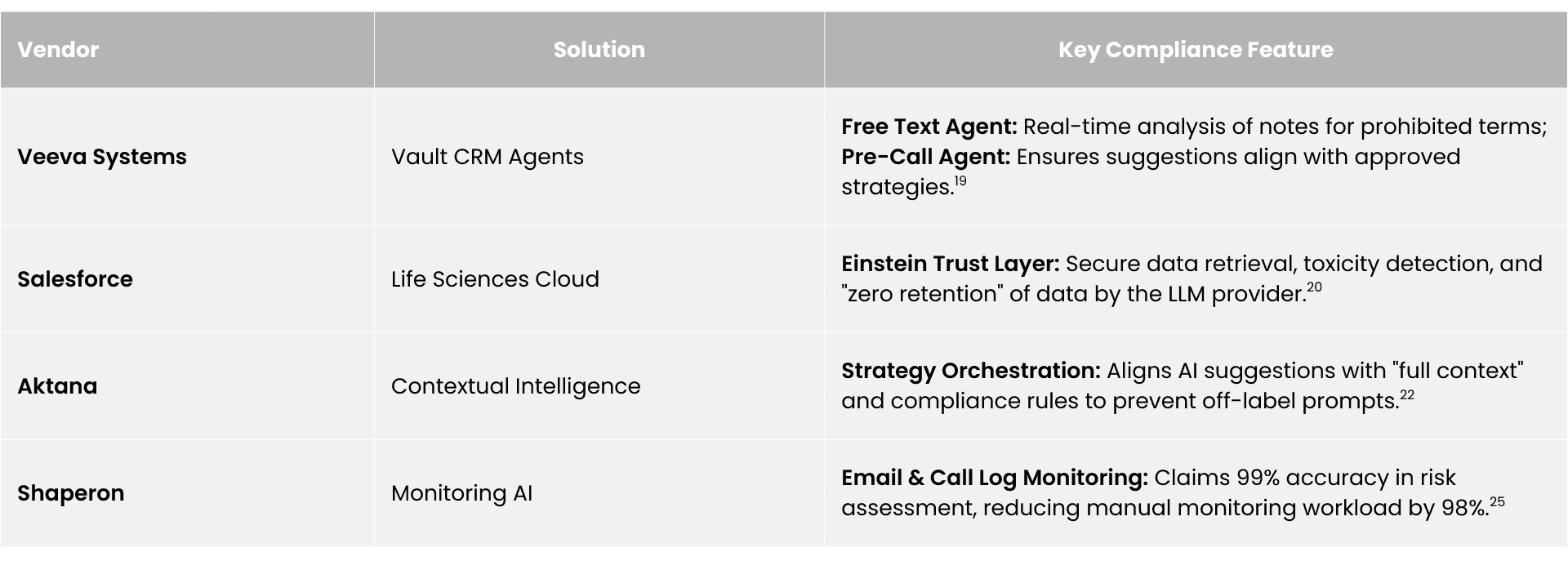

Tier 1: Pharma Native Platforms (The "Walled Gardens")

- Key Players: Veeva Systems, IQVIA, Salesforce.

- Value Proposition: Deep integration with data/content (Vault, OCE). Compliance is "baked in."

- Strengths: High trust; "One-stop-shop"; Access to the "single source of truth" (approved content).

- Weaknesses: Vendor lock-in; Can be expensive; Slower innovation cycles than startups.

- Flagship Capabilities: Veeva's Vault CRM Agents (Free Text, Pre-Call)¹⁹; Salesforce's Einstein Trust Layer.²⁰

Tier 2: Vertical AI Specialists (The "Domain Experts")

- Key Players: Sorcero, WhizAI, Aktana, ZoomRx (Ferma), Eversana.

- Value Proposition: AI-first vendors dedicated to Life Sciences workflows.

- Strengths: Domain-specific ontologies; High accuracy in medical language; rapid deployment of specific use cases (e.g., MI chatbots).

- Weaknesses: Integration friction with core systems; Smaller scale.

- Flagship Capabilities: Sorcero's Medical Affairs Intelligence¹⁵; WhizAI's Conversational Analytics²³; Aktana's Contextual Intelligence.²²

Tier 3: Regulatory & Compliance AI (The "Auditors")

- Key Players: Norm.ai, Helio, Shaperon.

- Value Proposition: Specialized "RegTech" focusing purely on the legality and auditing of AI outputs.

- Strengths: "Lawyer-in-the-loop" architectures; specialized agents for regulatory logic; audit-centric.

- Weaknesses: Niche focus; requires integration into the chat workflow.

- Flagship Capabilities: Norm.ai's Regulatory AI Agents that convert laws into code¹⁰; Helio's Communications Monitoring.³⁰

Tier 4: Horizontal AI Security (The "Shields")

- Key Players: Lakera, Guardrails AI, NVIDIA NeMo, Monitaur.

- Value Proposition: General purpose LLM security and observability.

- Strengths: Best-in-class security (injection defense); scalable technology; low latency.

- Weaknesses: Lacks pre-built understanding of pharma regulations (e.g., 21 CFR); requires heavy customization.

- Flagship Capabilities: Lakera's Gandalf red-teaming dataset¹¹; NVIDIA NeMo Guardrails.³¹

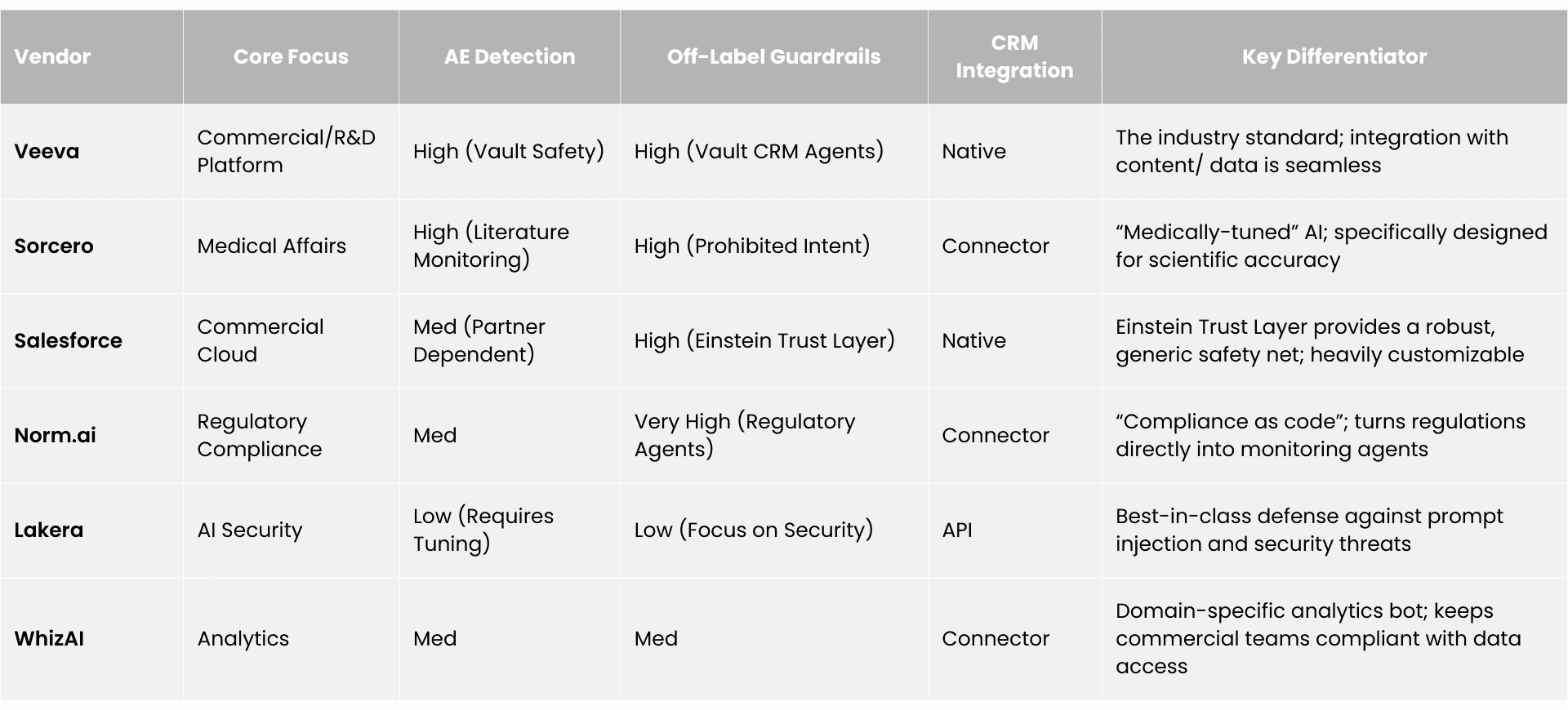

Comparative Vendor Analysis Table

The AI compliance monitoring market segments into four tiers:

- Tier 1 (Pharma-Native Platforms) includes Veeva Systems, IQVIA, and Salesforce — offering deep CRM integration and compliance "baked in" but with vendor lock-in and slower innovation.

- Tier 2 (Vertical AI Specialists) includes Sorcero, WhizAI, Aktana, ZoomRx (Ferma), and Eversana — AI-first vendors with domain-specific biomedical ontologies and high accuracy in medical language but smaller scale.

- Tier 3 (Regulatory and Compliance AI) includes Norm.ai, Helio, and Shaperon — specialized RegTech with "compliance as code" and audit-centric architectures.

- Tier 4 (Horizontal AI Security) includes Lakera, Guardrails AI, NVIDIA NeMo, and Monitaur — general-purpose LLM security with best-in-class prompt injection defense but no pre-built pharma regulatory understanding.

Companies typically assemble a "defense-in-depth" strategy combining solutions across multiple tiers.

Agentic AI Compliance Monitoring: From Flagging Violations to Fixing Them

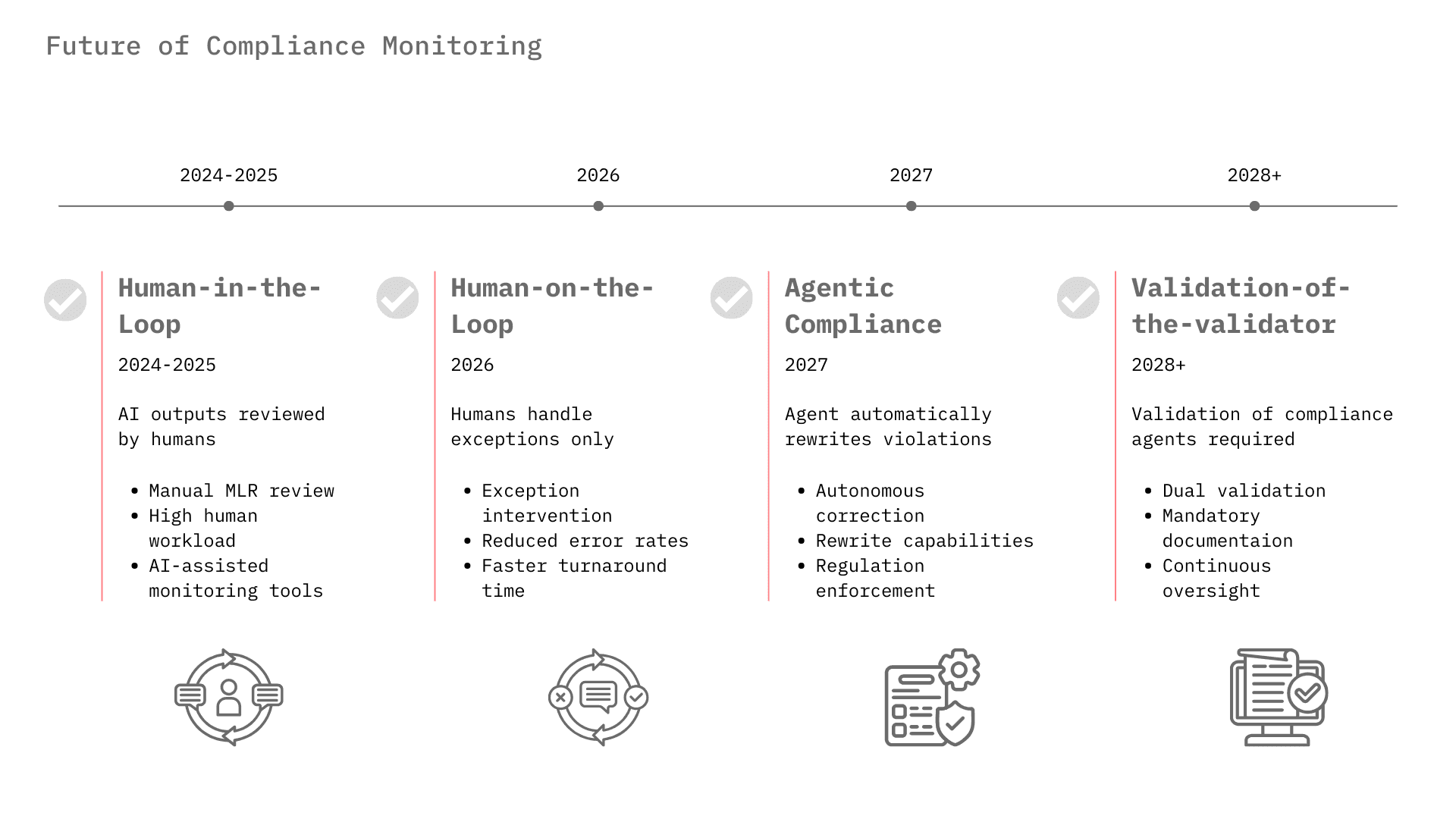

From "Human-in-the-Loop" to "Human-on-the-Loop"

Most pharma AI deployments today place a human reviewer in front of AI outputs, a model known as Human-in-the-Loop, because organizations do not yet trust the AI to operate unchecked. For example, MLR review of marketing materials is still heavily manual, though AI is beginning to pre-screen content.³² As compliance monitoring algorithms improve and demonstrate error rates lower than those of human reviewers, the industry will shift to Human-on-the-Loop, where the AI operates autonomously and humans manage only the exceptions flagged by the monitoring layer.
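The difference between the two models comes down to a routing decision. The sketch below is a minimal illustration, assuming a hypothetical monitoring layer that attaches a risk score between 0 and 1 to each output; the `MonitoredOutput` type, the `route` function, and the 0.2 threshold are our own placeholders, not taken from any vendor.

```python
from dataclasses import dataclass


@dataclass
class MonitoredOutput:
    text: str
    risk_score: float  # hypothetical score from the monitoring layer: 0.0 (safe) to 1.0 (risky)


def route(output: MonitoredOutput, mode: str, threshold: float = 0.2) -> str:
    """Return 'human_review' or 'auto_release' for a single AI output."""
    if mode == "in_the_loop":
        # Human-in-the-Loop: every output waits for a reviewer.
        return "human_review"
    if mode == "on_the_loop":
        # Human-on-the-Loop: only outputs at or above the threshold are escalated.
        return "human_review" if output.risk_score >= threshold else "auto_release"
    raise ValueError(f"unknown mode: {mode}")


outputs = [
    MonitoredOutput("Approved dosing summary", 0.05),
    MonitoredOutput("Draft with a possible off-label claim", 0.85),
]
decisions = [route(o, "on_the_loop") for o in outputs]
```

In this toy run the first output is released automatically and the second is escalated. In practice the threshold would have to be justified by the monitor's measured error rate.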

The Rise of "Agentic Compliance"

We are moving beyond passive monitoring to Agentic Compliance. In this model, a "Compliance Agent" doesn't just flag a violation; it fixes it. If a sales rep's draft email contains an off-label claim, the Compliance Agent will rewrite it to be compliant and present the revision for approval. Veeva's roadmap for "Free Text Agents" and Norm.ai's capabilities point directly to this future.¹⁰
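A minimal sketch of the flag, fix, and approve loop follows. It is deliberately simplified: a real Compliance Agent would reason over the approved label with a language model, whereas this example uses a hard-coded phrase list and "fixes" a draft by dropping the offending sentence. `OFF_LABEL_PHRASES`, `Proposal`, and `review_draft` are hypothetical names for illustration only.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical rule set: phrases treated as off-label for an imaginary product.
OFF_LABEL_PHRASES = ["pediatric use", "weight loss"]


@dataclass
class Proposal:
    original: str
    revised: str
    findings: List[str] = field(default_factory=list)
    status: str = "no_action"


def review_draft(draft: str) -> Proposal:
    """Flag sentences containing off-label phrases and propose a revision without them."""
    sentences = [s.strip() for s in draft.split(".") if s.strip()]
    kept, findings = [], []
    for sentence in sentences:
        hits = [p for p in OFF_LABEL_PHRASES if p in sentence.lower()]
        if hits:
            findings.append(f"off-label phrase {hits[0]!r} in: {sentence!r}")
        else:
            kept.append(sentence)
    revised = (". ".join(kept) + ".") if kept else ""
    # The agent never sends the revision itself; a human still approves it.
    status = "pending_approval" if findings else "no_action"
    return Proposal(draft, revised, findings, status)


proposal = review_draft(
    "Our product is approved for adults with type 2 diabetes. It also helps with weight loss."
)
```

The design point is the `pending_approval` status: the agent proposes a compliant revision along with its findings, and the sender or a reviewer remains the one who releases it.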

Regulatory Harmonization and the "Validation of the Validator"

As the EU AI Act comes into force (classifying some medical AI as "high risk") and the FDA finalizes its AI guidance, the "Compliance Monitoring" market will become a mandatory infrastructure layer, similar to cybersecurity. Companies will need to prove not just that their AI works, but that their monitoring of the AI works. This concept, "Validation of the Validator," will become a key requirement for GxP (Good Practice) compliance.
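One way to picture "Validation of the Validator" is to score the monitoring layer itself against a labeled challenge set, the same way any classifier would be evaluated. The sketch below assumes a hypothetical `monitor` function that returns `True` when it flags a text, and a tiny hand-labeled set invented for illustration; a real validation package would use a much larger, curated, version-controlled set.

```python
from typing import Callable, Dict, List, Tuple


def validate_monitor(
    monitor: Callable[[str], bool],
    labeled: List[Tuple[str, bool]],
) -> Dict[str, float]:
    """Compare monitor flags to ground-truth labels and report recall and precision."""
    tp = fp = fn = tn = 0
    for text, is_violation in labeled:
        flagged = monitor(text)
        if flagged and is_violation:
            tp += 1
        elif flagged and not is_violation:
            fp += 1
        elif not flagged and is_violation:
            fn += 1
        else:
            tn += 1
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn, "recall": recall, "precision": precision}


def toy_monitor(text: str) -> bool:
    # Toy monitor for illustration: flags any text containing the word "cure".
    return "cure" in text.lower()


challenge_set = [
    ("This drug will cure your condition", True),
    ("Guaranteed results in every patient", True),
    ("See full prescribing information", False),
    ("There is no known cure for this disease", False),
]
report = validate_monitor(toy_monitor, challenge_set)
```

Here the keyword monitor misses one true violation and raises one false alarm, so both recall and precision come out at 0.5. This is the kind of evidence a company would need to produce, and improve on, before claiming its monitoring works.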

These AI monitoring challenges build on foundational compliance requirements — including audit trails, documentation integrity, and GxP standards. For the baseline framework, see our guide to clinical trial compliance and documentation best practices.

The three architectural layers covered in this report (guardrails, semantic agents, and platform-native governance) are necessary components of a complete AI compliance stack. But they address only the output layer. The operational layer (field conduct, patient program transactions, and regulatory change response) requires a platform that monitors the whole business, not just what the chatbot says.

For a complete evaluation framework across all six compliance capabilities (regulatory intelligence, continuous monitoring, policy governance, AI copilot, purpose-built systems, and transparency reporting), see how Zelthy's compliance intelligence platform is built for pharma operations.

Conclusion

The market for AI output compliance monitoring in pharma is transitioning from a "nice-to-have" innovation project to critical "license-to-operate" infrastructure. The proliferation of HCP and sales chatbots is currently throttled by compliance risks, specifically hallucination and off-label promotion.

For Sales Team Chatbots, the market is consolidating around platform-native solutions (Veeva, Salesforce) that integrate monitoring directly into the CRM workflow to enable capabilities like free-text capture. For HCP Chatbots, specifically in Medical Information, there is a vibrant market for specialized vendors (Sorcero, Eversana) that can guarantee scientific accuracy and adverse event detection.

Investors and stakeholders should prioritize technologies that offer Semantic Compliance, the ability to understand the meaning of regulations and apply them to conversation, over simple keyword filtering. The winners in this space will be those who can provide the Glass Box, which offers verifiable and audit-ready proof that the AI remained within the guardrails of the law. As pharma races to deploy the engine of Generative AI, the value of the brakes, the compliance monitoring layer, is skyrocketing.

Frequently Asked Questions

What is AI output compliance monitoring in pharma?

AI output compliance monitoring is the continuous surveillance, auditing, and governance of AI-generated content in pharmaceutical operations, particularly LLM-powered chatbots and HCP-facing agents, to ensure every output meets FDA, EMA, and local regulatory requirements for truthfulness, fair balance, and adverse event reporting. It is becoming a mandatory component of the pharma commercial tech stack as generative AI bypasses traditional pre-approval review processes.

Why can't pharma rely on standard MLR review for AI-generated content?

Traditional Medical-Legal-Regulatory (MLR) review governs static assets, such as detail aids, print ads, and email templates, before dissemination. AI chatbots generate probabilistic responses in real time, bypassing pre-approval containment entirely. Because each interaction can produce a novel output, there is no fixed asset for MLR to review before the response reaches the user. This requires a fundamental shift from pre-approval review to real-time monitoring infrastructure.

What are the FDA's enforcement powers over pharma AI chatbots?

The FDA's Office of Prescription Drug Promotion (OPDP) holds authority over all promotional communications, including AI-generated content. If an HCP chatbot fabricates a clinical result or implies efficacy in an unapproved indication, the manufacturer faces strict liability for misbranding under the FD&C Act. The FDA has committed to deploying its own AI tools to proactively surveil digital promotional content, including chatbot interactions, significantly raising the probability of detection for non-compliant outputs.

What is the 'fair balance' requirement for pharma chatbots?

Fair balance requires that risk information be presented with equal prominence to benefit claims. In chatbot interactions, this means a system responding to an efficacy question must simultaneously surface relevant safety warnings; it cannot bury risk information in a dense paragraph or offload it to a hyperlink. The FDA's Clear, Conspicuous, and Neutral (CCN) standards require that safety information be integral to the interaction, not secondary.

What are the three architectural layers of pharma AI compliance monitoring?

Pharma AI compliance monitoring is structured in three layers: (1) Real-time guardrails — infrastructure-level tools (e.g., Lakera, NVIDIA NeMo) that block PII, prevent prompt injection, and enforce operational boundaries at the point of inference; (2) Semantic and regulatory agents — vertical specialists (e.g., Sorcero, Norm.ai) that detect off-label promotion, verify fair balance, and flag adverse events; (3) Platform-embedded compliance — CRM incumbents like Veeva and Salesforce integrating monitoring natively into commercial workflows.

How does AI compliance monitoring handle adverse event detection?

Chatbot interactions with HCPs or patients inevitably surface health data. A patient mentioning a symptom following medication use constitutes a potential adverse event (AE) that must be reported within FDA-mandated timelines (15 calendar days for serious, unexpected events). AI compliance monitoring systems must recognize AE language in unstructured chat text, classify the event, capture reporter details, and route data to pharmacovigilance databases. Manual log review is operationally infeasible at scale, making automated AE intake a core monitoring requirement.
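The recognize, classify, capture, and route steps can be sketched in a few lines. This is an illustration under loud assumptions: production systems rely on coded medical vocabularies and trained models rather than substring matching, and the term lists, the `AECase` type, and the `pv_expedited` / `pv_standard` queue names are all invented here.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Illustrative term lists only; not a substitute for a coded medical vocabulary.
SYMPTOM_TERMS = ["rash", "nausea", "dizziness", "chest pain"]
SERIOUS_TERMS = ["hospitalized", "hospitalised", "life-threatening", "died"]
# Hypothetical cue that the symptom is linked to medication use.
MEDICATION_CONTEXT = re.compile(
    r"\b(after|since|while)\s+(taking|starting|using)\b", re.IGNORECASE
)


@dataclass
class AECase:
    message: str
    reporter_id: str
    symptom: str
    serious: bool
    queue: str


def intake(message: str, reporter_id: str) -> Optional[AECase]:
    """Return an AECase if the message looks like a potential adverse event, else None."""
    lowered = message.lower()
    # 1. Recognize: a symptom term plus a medication-use cue.
    symptom = next((t for t in SYMPTOM_TERMS if t in lowered), None)
    if symptom is None or not MEDICATION_CONTEXT.search(message):
        return None
    # 2. Classify: a crude seriousness check that drives the reporting clock.
    serious = any(t in lowered for t in SERIOUS_TERMS)
    # 3. Capture the reporter and 4. route to a (hypothetical) pharmacovigilance queue.
    queue = "pv_expedited" if serious else "pv_standard"
    return AECase(message, reporter_id, symptom, serious, queue)


case = intake("I developed a rash after taking the tablets and was hospitalized", "hcp-001")
no_case = intake("What is the recommended dose?", "hcp-002")
```

The first message is captured as a serious case and sent to the expedited queue; the dosing question produces no case. Substring matching will over- and under-trigger on real conversations, which is exactly why the semantic approaches discussed in this report matter.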

What does a 'glass box' audit trail mean in pharma AI governance?

A glass box audit trail documents not just what an AI system blocked or modified, but why, citing the specific regulatory statute or business rule that triggered the action. For example: "Response blocked because it implied efficacy in a pediatric population, contradicting Section 4.1 of the USPI." This level of explainability is required to respond to FDA inquiries or DOJ investigations, and differentiates compliant governance systems from simple content filters.
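As a rough sketch of what such a record could contain, the example below stores the action, the triggering rule, the citation, and the rationale together in one log line. The `AuditRecord` fields and the `OFF_LABEL_POPULATION` rule identifier are hypothetical; the citation and rationale reuse the example above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str       # UTC time of the decision, ISO 8601
    action: str          # e.g. "blocked", "modified", "allowed"
    output_excerpt: str  # the AI text that triggered the action
    rule_id: str         # hypothetical internal rule identifier
    citation: str        # the statute, label section, or policy clause relied on
    rationale: str       # human-readable explanation of why the rule fired


def make_record(action: str, output_excerpt: str, rule_id: str,
                citation: str, rationale: str) -> AuditRecord:
    """Build an immutable audit record stamped with the current UTC time."""
    timestamp = datetime.now(timezone.utc).isoformat()
    return AuditRecord(timestamp, action, output_excerpt, rule_id, citation, rationale)


record = make_record(
    action="blocked",
    output_excerpt="This medicine is also effective in children.",
    rule_id="OFF_LABEL_POPULATION",
    citation="USPI Section 4.1",
    rationale="Response implied efficacy in a pediatric population.",
)
# One JSON object per line is a common shape for append-only audit logs.
log_line = json.dumps(asdict(record), sort_keys=True)
```

Keeping the citation and rationale in the same record as the action is what separates this from a plain content filter log, which would typically record only that something was blocked.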

References

1. FDA begins crackdown on Direct-to-Consumer pharmaceutical advertising. (2025, September 24). Latham & Watkins.

2. Fact Sheet: Ensuring Patient Safety Through Reform of Direct-to-Consumer Pharmaceutical Advertisement Policies. (2025, September 9). US Department of Health & Human Services.

3. Combs, K. B., Levine, G. H., Oyster, J., & Stain, P. (2025, September 12). The Administration Targets Direct-to-Consumer Prescription Drug Advertising. Ropes & Gray.

4. 3 Ways to leverage Generative AI in Pharmacovigilance. (2024, August 1). TransPerfect Life Sciences.

5. AI in Drug Safety: Building the Elusive ‘Loch Ness Monster’ of Reporting Tools. (2023, May 19). Pfizer Inc.

6. Laurent, A. (2026, January 21). AI Agents in Pharmacovigilance: A Technical Overview. IntuitionLabs.

7. Automating adverse events reporting for pharma with Amazon Connect and Amazon Lex. (2023, February 16). Amazon Web Services.

8. Streamlining Adverse Event Call Processing with Conversational AI at a Major Life Sciences Company. (2024, November 11). Airolabs.ai.

9. Survey: 65 Percent of Pharma Marketers Distrust AI for Creating Regulatory Compliance Submissions. (2025, November 25). Business Wire.

10. Norm Ai Platform. Norm Ai.

11. Lakera Guard: Real-Time Security for your AI agents. Lakera.

12. Shah, D. (2025, September 8). Top 12 LLM Security Tools: Paid & Free (Overview). Lakera.

13. Ama, E. B. (2025, May 21). Chatbot Security Essentials: Safeguarding LLM-Powered Conversations. Lakera.

14. Lakera Guard AI Security Review: Protect Your AI Systems. (2025, November 3). TutorialsWithAI.

15. Sorcero Announces AI Guardrails, AI-Native Data Capture, and Insights Analytics to Transform Life Sciences Workflows. (2025, October 16). PR Newswire.

16. Sorcero Platform | AI & Data Intelligence Transforming Life Sciences. Sorcero.

17. Norm Ai | Turn Legal & Compliance into a Strategic Advantage. Norm Ai.

18. Ferma Agents | Market intelligence that works for you. Ferma Agents.

19. Veeva AI for Vault CRM: Drive Commercial Efficiency and Effectiveness. Veeva Systems.

20. Decoding Salesforce's AI Bet: What It Means for Life Sciences Commercial Success. (2025, April 25). Indegene.

21. Life Sciences CRM | Exeevo. Exeevo.

22. Aktana | AI for Life Sciences | Smarter HCP Engagement. Aktana.

23. IQVIA | Analytics, Insights & Reporting. IQVIA.

24. Patient Services - WhizAI, an IQVIA Business. WhizAI.

25. Shaperon | Safeguarding pharmaceutical industry trust through advanced AI Monitoring. Shaperon.

26. Eversana | Medical Affairs Digital Engagement and Innovation. Eversana.

27. Desai, K. S., et al. (2025). Waldo: Automated discovery of adverse events from unstructured self reports. PLOS Digital Health, 4(9), e0001011. doi:10.1371/journal.pdig.0001011

28. Introducing HEALTH ANSWERS BY PFIZER: a new consumer digital product providing answers you can trust in the moments that matter most. (2025, February 11). Pfizer Inc.

29. How we helped a leading pharma company use LLMs to monitor adverse events on social media. (2025, November 4). Visium.

30. AI-Powered Compliance Monitoring Solutions for Life Sciences. Helio Group.

31. Banerjee, S., & Arora, M. (2025, September 18). Practical Guardrails in AI: types, tools, and detection libraries. Tredence.

32. Calero, M. (2025, October 2). Faster Pharma Content Approvals with AI: What Pre-Screening Means for Compliance. Ciberspring.